What to do about memory re: crypton/ite?

I’ve been absent for some time due to the need to focus on personal health. It can be a struggle to reach out at times like these, but this last month I’ve not been idle. Herein lie the results of my investigation in the meantime.

In order for botan to provide a palatable alternative to crypton/ite, it is important to understand how people are using it, and what dependencies it implies. One of those dependencies is memory, which supplies a variety of typeclasses and data types regarding low-level pointers and memory. This package, like cryptonite and by the same author, has been archived, and will not be updated in the future.

This is problematic, because even after forking crypton/ite, many libraries still have a transitive dependency on memory. The reason for this is that one of the prominent design choices was to use constraints instead of concrete data types in many of the typeclasses and functions.

For example:

class Cipher cipher where

...

cipherInit :: ByteArray key => key -> CryptoFailable cipher

...

class Cipher cipher => BlockCipher cipher where

...

ecbEncrypt :: ByteArray ba => cipher -> ba -> ba

ecbDecrypt :: ByteArray ba => cipher -> ba -> ba

...

Thankfully, most algorithm-specific outputs are wrapped in a concrete newtype.

hash :: (ByteArrayAccess ba, HashAlgorithm a) => ba -> Digest a

That does seem to be the general rule, that unstructured blobs of binary data be represented a ByteArray, mostly plaintext / ciphertext / key / salt / password -type data, but it can be slightly onerous when parameters are allowed to be heterogenous

bcrypt

:: (ByteArray salt, ByteArray password, ByteArray output)

=> Int -> salt -> password -> output

As a result, unless one is dealing exclusively with ByteString, and / or the data types supplied by crypton/ite itself, use of memory is almost compulsory. Furthermore, if we wish to provide a similar interface using botan as a backend, this means either accepting memory as a dependency, or doing something about it.

As such, I have spent a lot of time familiarizing myself with its guts and how it is used. I really wanted to know the intent behind many of the decisions the author made (such as how the different allocation / create functions need to be used re: inlining and unsafePerformIO) because it doesn’t do to simply copy and paste. Here’s what I have learned:

The following libraries use both crypton/ite and memory, but there are many more.

amazonka-corestacktlspasswordx509

One can look at the reverse dependencies for crypton/ite and memory to see the general cross-section. Thankfully most dependents of crypton/ite found ByteString and the supplied data types sufficient, and so they did not promulgate the issue by depending on memory directly, only indirectly.

So, what is memory?

It is a small, innocuous package, mostly exposing the following classes that allow ByteString and primitive:Data.Primitive.ByteArray-like access to pointers to contiguous memory:

-- Data.ByteArray

class ByteArrayAccess ba where

length :: ba -> Int

withByteArray :: ba -> (Ptr p -> IO a) -> IO a

copyByteArrayToPtr :: ba -> Ptr p -> IO ()

class (Eq ba, Ord ba, Monoid ba, ByteArrayAccess ba) => ByteArray ba where

allocRet :: Int -> (Ptr p -> IO a) -> IO (a, ba)

data Bytes

data ScrubbedBytes

-- Data.ByteArray.Sized

class (ByteArrayAccess c, KnownNat n) => ByteArrayN (n :: Nat) c | c -> n where

allocRet :: forall p a. Proxy n -> (Ptr p -> IO a) -> IO (a, c)

data SizedByteArray (n :: Nat) ba

These classes allow for fast and efficient manipulation of memory, and basically wrap up and classify a lot of low-level functions that GHC uses internally - incredibly powerful, and about as far as you can go without dropping down to using primitive and MagicHash directly.

Notably, the package does not distinguish between pinned and unpinned memory, though the unspoken assumption is that the memory pointed to in withByteArray :: ba -> (Ptr p -> IO a) -> IO a will not be moved for the duration of that operation.

Aside from a few minor quibbles of hierarchy and nomenclature for purposes of consistency with Data.Primitive.ByteArray and Foreign.* and for better separation of bytewise functions vs elementwise functions, it is a useful set of classes. I see no reason to make large changes to it.

So, how is memory being used

I do wish to understand how memory is being used, and so I have gone over many of the direct dependents in order to collate their use cases.

A few particular use cases dominate:

-

ByteArrayAccess and ByteArray classes

-

convert and constEq functions

-

convertToBase functions

Aside from that, there are several errata in the package that we must also consider. Notable:

ScrubbedBytes-

SizedByteArray and ByteArrayN

ByteArrayAccess and ByteArray

By and far, the most-oft used parts of this package are the classes themselves. The major user of these classes is the crypton/ite ecology, and are the core of the package. Enough said - high priority.

convert and constEq

If they aren’t using ByteArray as a general ByteString-like class, directly, chances are they using memory in order to convert between ByteArray-conforming types. This is actually quite sensible, as many cryptography-related packages have some modules / classes / functions for converting various primitives / newtypes to and from bytestrings.

The other common use is to pull in constEq for constant-time equality checks for sensitive information.

Quite often, the memory package is pulled in for just for one or both of these. Between that and the classes themselves, we’ve covered the majority of memory use cases

Base and convertToBase

Seeing occasional use, memory also exposes functionality for converting between various bases in the Data.ByteArray.Encoding module. This is important, but not critical, because there are already packages for converting bytestrings to various bases.

SizedByteArray and ByteArrayN

Seeing infrequent use (eg, NaCl). Essentially a Nat-parameterized ByteArray, it might have been called FiniteByteArray if being consistent with Bits and FiniteBits.

ScrubbedBytes

Seeing infrequent use (eg, NaCl).

memory supplies a ScrubbedBytes class for sensitive memory that protects its Show instance and is automatically zeroed before release. This is a really important but also niche use, and I only observed one or two packages using this functionality.

Here is how the zeroing of memory works for ScrubbedBytes:

getScrubber :: Addr# -> State# RealWorld -> State# RealWorld

getScrubber addr s =

let IO scrubBytes = memSet (Ptr addr) 0 (I# sz)

in case scrubBytes s of

(# s', _ #) -> s'

Essentially, it is using extremely low-level RealWorld magic to dynamically vend a memSet function call in the finalizer of ScrubbedBytes, in an effort to avoid the compiler eliding the zeroing due to the following free (that is, many assemblers will ignore memSet if it is immediately followed by free, because such a memSet is normally considered to be a no-op).

Even if a compiler emits the instructions to zero memory in the compiled binary, that is no guarantee that it will be run, because the CPU may still optimize it out during runtime. As a result, most operating systems provide a specific OS-dependent function for this in order to ensure that the memory is zeroed, and the volatile keyword and memset_explicit function have only recently been properly standardized in C.

I cannot attest to the efficacy of this functionality regarding GHC specifically, but for reference, here is how Botan::secure_scrub_memory works in C++

void secure_scrub_memory(void* ptr, size_t n) {

#if defined(BOTAN_TARGET_OS_HAS_RTLSECUREZEROMEMORY)

::RtlSecureZeroMemory(ptr, n);

#elif defined(BOTAN_TARGET_OS_HAS_EXPLICIT_BZERO)

::explicit_bzero(ptr, n);

#elif defined(BOTAN_TARGET_OS_HAS_EXPLICIT_MEMSET)

(void)::explicit_memset(ptr, 0, n);

#elif defined(BOTAN_USE_VOLATILE_MEMSET_FOR_ZERO) && (BOTAN_USE_VOLATILE_MEMSET_FOR_ZERO == 1)

static void* (*const volatile memset_ptr)(void*, int, size_t) = std::memset;

(memset_ptr)(ptr, 0, n);

#else

volatile uint8_t* p = reinterpret_cast<volatile uint8_t*>(ptr);

for(size_t i = 0; i != n; ++i)

p[i] = 0;

#endif

}

Replacement of this functionality is considered important, but as a low-level priority compared to the higher-priority functionalities.

Other errara

Outside of that, there are portions of memory that see little to no actual use:

- Parser and Packer modules

- Endianness

- Word128

- The SipHash and FNVHash modules

I do not think we need to worry about these for the time being.

So, what do we do about it?

I think it would be wise to provide ByteArray-like functionality in botan for better compatibility with crypton/ite and easier migration, and this assessment helps illustrate what capability needs to be preserved, and what can be ignored.

Thus I am considering creating a slimmed down bytearray-classes package, that plucks out the necessary classes and functions in order to allow us to depend on it instead of memory.

We definitely want the bulk of these modules, maybe ignoring the concrete data types and focusing only on the classes:

- Data.ByteArray

- Data.ByteArray.Encoding

- Data.ByteArray.Sized

- We also probably do want to make some data types a la

Bytes and ScrubbedBytes

Using bytearray-classes

Migration would ideally be simple, as I don’t intend on changing the interfaces much, but this actually leads to a rather thorny problem and important decision - where in botan does this library get used? The answer to that is slightly frustating, and one that gives me no small amount of anxiety:

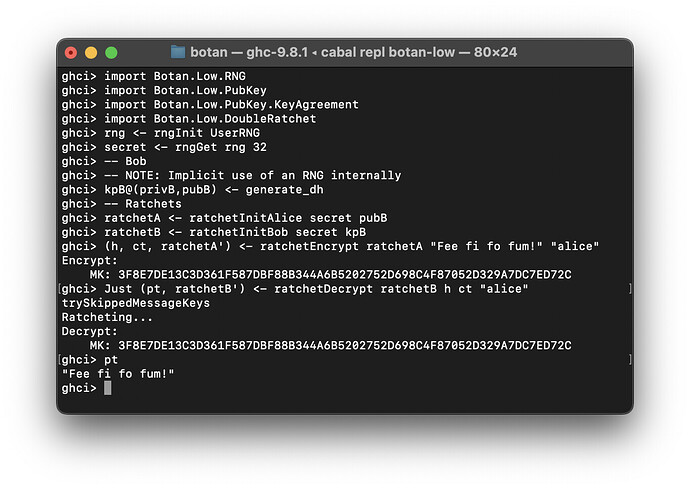

For improving compatibility / ease of migration, the best place to use bytearray-classes and ByteArray constraints like crypton/ite is in botan-low.

The reasoning here is that the concrete ByteString functions can be obtained from the ByteArray functions simply by restricting the types, and we would be able to make all of the internal logic and function generators for allocating ByteStrings much more consistent.

This of course depends on whether people actually prefer a ByteArray constraint over a concrete ByteString parameter at all, as you may find use of TypeApplications suddenly necessary.

:

: